As foundation models (FMs) gain traction in medical imaging, experts are expressing cautious optimism about their potential to transform diagnostics while calling for stringent privacy protections and regulatory frameworks. A recent study published in npj Digital Medicine examines the dual challenge of harnessing these powerful AI systems for clinical benefit without compromising patient privacy. It argues that technical, governance, and regulatory interventions are all needed to ensure that FMs deliver innovation without exposing individuals to re-identification risks.

Technical Safeguards and Privacy Challenges

The study identifies key technical challenges in deploying foundation models for medical imaging, particularly the risk of re-identification when model outputs are combined with external datasets. The researchers note that current studies often use cohorts with limited diversity, which may not reflect the full spectrum of privacy risks in real-world settings. Targeted experiments could sharpen these estimates: a feature ablation or attention-mapping study, for instance, could clarify whether a model's ability to predict demographic attributes is truly distinct from an ability to identify individuals.

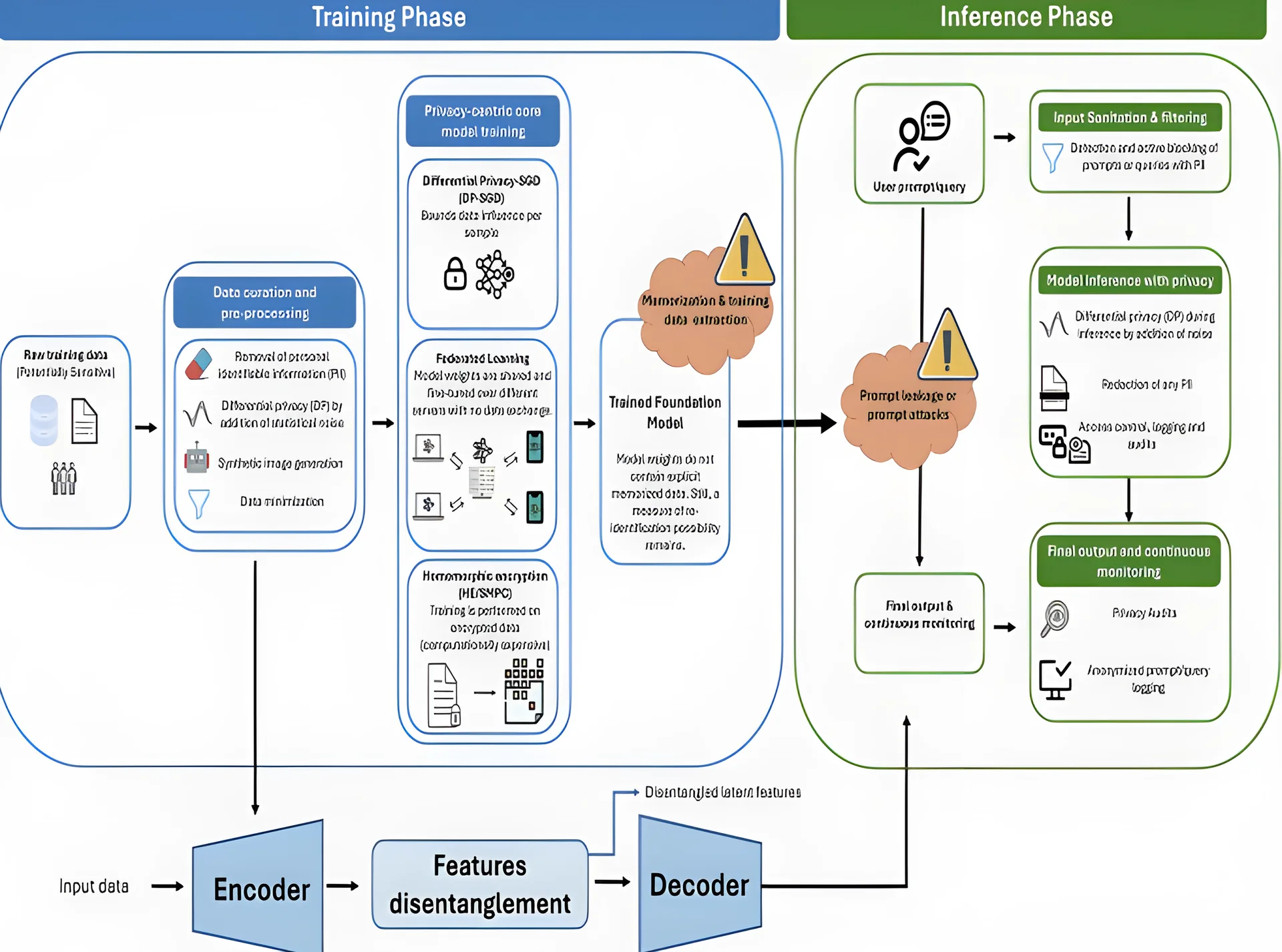

Technical solutions such as feature disentanglement are being proposed to separate clinically relevant patterns from identifying details. This method allows models to focus on disease markers while minimizing exposure of sensitive information. Additionally, federated learning frameworks are being explored to reduce the risk of centralized data breaches by keeping training data decentralized across institutions. Synthetic data generation, when combined with strong privacy guarantees, can further protect individual identities without sacrificing the utility of the models.
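The study does not say which aggregation rule these federated frameworks use. As a minimal sketch, assuming the widely used federated averaging (FedAvg) scheme, each hospital trains locally and shares only a parameter vector, which a coordinator averages with weights proportional to each site's sample count. The function name and the toy numbers are illustrative.

```python
from typing import List, Sequence


def federated_average(client_weights: Sequence[Sequence[float]],
                      client_sizes: Sequence[int]) -> List[float]:
    """Federated averaging (FedAvg): combine locally trained parameter vectors,
    weighting each site by how many training examples it holds.

    Only these parameter vectors leave a site; the images themselves stay local.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one parameter vector and one sample count per site")
    total = sum(client_sizes)
    dim = len(client_weights[0])
    averaged = [0.0] * dim
    for weights, n in zip(client_weights, client_sizes):
        if len(weights) != dim:
            raise ValueError("all sites must share the same model shape")
        for i, w in enumerate(weights):
            # Each site's contribution is scaled by its share of all training examples.
            averaged[i] += w * n / total
    return averaged


# Hypothetical round: three hospitals report parameters after local training.
site_params = [[0.2, 1.0], [0.4, 0.0], [0.6, 0.5]]
site_sizes = [100, 300, 100]
global_params = federated_average(site_params, site_sizes)
print(global_params)
```

The second parameter lands near 0.3 rather than the unweighted mean of 0.5 because the middle site holds most of the data. A deployed system would also need many rounds and protections such as secure aggregation; this shows only the averaging step.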

Despite these advances, the study warns that privacy breaches are most plausible when model outputs are combined with external data sources. This underscores the need for empirical simulations and more diverse training cohorts to assess and mitigate real-world risks accurately. The researchers argue that without rigorous design and transparency, privacy concerns could undermine the benefits of FMs.
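One simple form such a simulation could take is a toy linkage attack. This is a minimal sketch assuming that a model's outputs reveal an age band and sex for each de-identified scan and that the acquisition site is released alongside it; it measures the share of scans that match exactly one named person in a hypothetical external dataset. All names, fields, and records are illustrative.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Hypothetical quasi-identifiers: (age band, sex, acquisition site).
# Age band and sex stand in for attributes a model might infer from an image.
QuasiId = Tuple[str, str, str]


def reidentification_rate(model_outputs: List[QuasiId],
                          external_records: Dict[str, QuasiId]) -> float:
    """Share of de-identified scans whose inferred attributes match exactly one
    named person in an external dataset (a toy linkage-attack simulation)."""
    if not model_outputs:
        return 0.0
    # How many named people share each attribute combination?
    group_sizes = Counter(external_records.values())
    unique_matches = sum(1 for attrs in model_outputs if group_sizes[attrs] == 1)
    return unique_matches / len(model_outputs)


external = {
    "Person A": ("40-49", "F", "site1"),
    "Person B": ("40-49", "F", "site1"),
    "Person C": ("70-79", "M", "site2"),
}
scans = [("40-49", "F", "site1"), ("70-79", "M", "site2")]
print(reidentification_rate(scans, external))  # 0.5: only the second scan is unique
```

The first scan hides among two candidates, while the second pins down a single person. Richer model outputs shrink these groups, which is why diverse cohorts and realistic external datasets matter when estimating risk.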

Policy and Regulatory Frameworks

From a policy perspective, the study calls for updated legislation that addresses AI-specific risks. In the U.S., the Health Insurance Portability and Accountability Act (HIPAA) may need revisions to account for the unique challenges posed by foundation models. In the European Union, the General Data Protection Regulation (GDPR) already provides a strong framework for data protection, requiring lawful, fair, and transparent data processing. Under the GDPR, individuals have the right to access, correct, erase, or transfer their personal data, and data controllers must demonstrate compliance through technical and organizational measures.

The recently enacted EU AI Act adds another layer of regulation with direct implications for data privacy in health-related AI systems. The Act takes a risk-based approach, imposing specific obligations on high-risk systems, including requirements intended to prevent biased and discriminatory outcomes. It requires providers of general-purpose AI models to disclose training data summaries, technical documentation, and risk mitigation strategies. The Act also prohibits certain uses of AI, such as real-time remote biometric identification in publicly accessible spaces for law enforcement, except in narrowly defined and authorized cases.

Complementing the AI Act, the EU Cyber Resilience Act (CRA), which entered into force in December 2024, imposes stringent cybersecurity requirements on products with digital elements, including healthcare tools not already covered by medical-device regulation. The CRA requires manufacturers to maintain a Software Bill of Materials (SBOM), handle product vulnerabilities, and report actively exploited vulnerabilities and severe incidents within 24 hours. Products are classified by criticality, with stricter conformity assessments for those designated as important or critical.

Interdisciplinary Collaboration and Real-World Applications

The study stresses the importance of cross-disciplinary collaboration among regulators, researchers, and clinicians to establish best practices in AI-driven healthcare. Ensuring both privacy and fairness in FMs demands not only technical innovation but also intentional coordination between clinicians, data scientists, ethicists, and legal experts.

Effective collaboration should rest on three core pillars: co-design protocols that involve domain experts during model development; shared governance frameworks, such as joint data use agreements and ethics review boards; and ongoing feedback loops between model users and developers. These structures support iterative assessment of fairness impacts and transparent reporting of mitigation strategies.

An example of these principles in practice is a multi-institutional ophthalmology consortium that developed a privacy-preserving foundation model to predict the progression of a rare retinal neurodegenerative disorder. The model used federated learning to train across sites without centralizing patient data. The data underwent personally identifiable information (PII) scrubbing, differential privacy transformations, and minimization so that only essential clinical features were retained. A feature disentanglement module further separated disease-relevant signals from sensitive attributes, improving generalizability and reducing privacy risk.
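The consortium's actual preprocessing pipeline is not described in detail. As a minimal sketch of the scrubbing and minimization steps, assuming tabular metadata stored as a dictionary, the example below keeps only an allowlist of clinical fields and coarsens exact age into a decade band. The field names and the allowlist are hypothetical.

```python
from typing import Any, Dict

# Hypothetical allowlist: the fields a progression model is assumed to need.
CLINICAL_FIELDS = {"age_years", "laterality", "visual_acuity", "oct_thickness_um"}


def minimize_record(record: Dict[str, Any]) -> Dict[str, Any]:
    """Drop every field not on the allowlist, then coarsen exact age to a decade band.

    Allowlisting (rather than blocklisting known identifiers) means a new identifying
    field is excluded by default instead of leaking until someone notices it.
    """
    kept = {k: v for k, v in record.items() if k in CLINICAL_FIELDS}
    if "age_years" in kept:
        decade = (int(kept.pop("age_years")) // 10) * 10
        kept["age_band"] = f"{decade}-{decade + 9}"
    return kept


raw = {
    "patient_name": "Jane Doe",
    "mrn": "123456",
    "scan_date": "2023-05-01",
    "age_years": 47,
    "laterality": "OD",
    "visual_acuity": 0.5,
    "oct_thickness_um": 260,
}
print(minimize_record(raw))
# {'laterality': 'OD', 'visual_acuity': 0.5, 'oct_thickness_um': 260, 'age_band': '40-49'}
```

This handles structured metadata only; identifiers burned into the image pixels themselves would need separate treatment.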

During inference, the model used input sanitization to block identifiable information, and differential privacy techniques limited the influence of any single individual's data. This real-world application shows how technical and governance strategies can be put into practice to protect patient privacy while advancing medical AI.
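The study does not specify which differential privacy mechanism was used. To show how such techniques bound one person's influence, here is a minimal sketch of the classic Laplace mechanism applied to a clipped mean, assuming neighboring datasets differ by replacing one patient's record and that the count n is public. Clipping each value to a fixed range caps how far any single patient can move the result, and the noise scale follows from that cap. The function name and the thickness values are illustrative.

```python
import random
from typing import Optional, Sequence


def dp_mean(values: Sequence[float], lower: float, upper: float,
            epsilon: float, rng: Optional[random.Random] = None) -> float:
    """Release the mean of `values` with epsilon-differential privacy (Laplace mechanism).

    Clipping each value to [lower, upper] bounds any one patient's effect on the mean:
    the sensitivity is (upper - lower) / n under replace-one neighbors with public n.
    """
    if not values or epsilon <= 0 or upper <= lower:
        raise ValueError("need data, a positive epsilon, and upper > lower")
    rng = rng or random.Random()
    n = len(values)
    clipped = [min(max(v, lower), upper) for v in values]
    sensitivity = (upper - lower) / n
    scale = sensitivity / epsilon
    # Laplace(0, scale) noise, drawn as the difference of two Exponential(1/scale) samples.
    noise = rng.expovariate(1 / scale) - rng.expovariate(1 / scale)
    return sum(clipped) / n + noise


# Hypothetical retinal thickness values in micrometres; the last one is an outlier.
thickness_um = [250.0, 262.0, 241.0, 300.0, 990.0]
print(dp_mean(thickness_um, lower=150.0, upper=400.0, epsilon=1.0, rng=random.Random(42)))
```

Smaller epsilon values add more noise and give stronger privacy; the outlier is clipped to 400 before averaging, so it cannot dominate the released value.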

As the regulatory landscape continues to evolve, the study emphasizes the need for a comprehensive strategy to mitigate re-identification risks. Technical safeguards such as feature disentanglement should be prioritized to isolate clinically relevant patterns from identifiable attributes. Federated learning reduces vulnerability by training models across institutions without pooling their data. Rigorously validated synthetic data adds a further layer of protection by reducing exposure of real patient records.
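As a deliberately simple, linear stand-in for feature disentanglement (not the learned module the study describes), the sketch below removes from each image embedding the direction that best separates two groups of a binary sensitive attribute, measured as the difference of the group means, and keeps everything orthogonal to it. The function name, the two-dimensional embeddings, and the attribute labels are hypothetical.

```python
from typing import List, Sequence

Vector = List[float]


def _dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def remove_attribute_direction(embeddings: Sequence[Vector],
                               sensitive: Sequence[int]) -> List[Vector]:
    """Project embeddings onto the subspace orthogonal to the difference between
    the mean embedding of group 1 and group 0 of a binary sensitive attribute."""
    group1 = [e for e, s in zip(embeddings, sensitive) if s == 1]
    group0 = [e for e, s in zip(embeddings, sensitive) if s == 0]
    if not group0 or not group1:
        raise ValueError("both attribute groups must be present")
    dim = len(embeddings[0])
    mean1 = [sum(e[i] for e in group1) / len(group1) for i in range(dim)]
    mean0 = [sum(e[i] for e in group0) / len(group0) for i in range(dim)]
    direction = [a - b for a, b in zip(mean1, mean0)]
    norm = _dot(direction, direction) ** 0.5
    if norm == 0:
        # Group means coincide, so there is no linear direction to remove.
        return [list(e) for e in embeddings]
    unit = [d / norm for d in direction]
    # Subtract each embedding's component along the sensitive direction.
    return [[x - _dot(e, unit) * u for x, u in zip(e, unit)] for e in embeddings]


# Toy data: coordinate 0 tracks the sensitive attribute, coordinate 1 a disease signal.
emb = [[1.0, 2.0], [1.0, 0.0], [3.0, 2.0], [3.0, 0.0]]
attr = [0, 0, 1, 1]
cleaned = remove_attribute_direction(emb, attr)
print(cleaned)  # [[0.0, 2.0], [0.0, 0.0], [0.0, 2.0], [0.0, 0.0]]
```

After projection the attribute coordinate is gone while the disease signal survives. A single linear projection cannot remove nonlinear traces of an attribute, which is one reason the ablation and attention-mapping studies mentioned earlier remain important.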

With most of the EU AI Act's provisions set to apply from August 2026, the study argues that models must be not only compliant by policy but also private by architecture. This requires embedding privacy and fairness considerations into the FM development pipeline from the outset. In doing so, developers can uphold both patients' privacy rights and their equitable treatment as AI-driven medical imaging continues to advance.