Artificial intelligence is no longer confined to isolated research labs or data science environments. As of 2026, AI has become a core operational component within enterprise software, driving decision-making, automation, customer experiences, and real-time analytics. However, organizations are increasingly recognizing that traditional development platforms were not built to handle the unique demands of AI workloads at production scale. This realization has sparked the rise of AI-native development platforms, which are specifically designed to manage the complex infrastructure needs of machine learning and generative AI systems.

Operational Challenges with Legacy Platforms

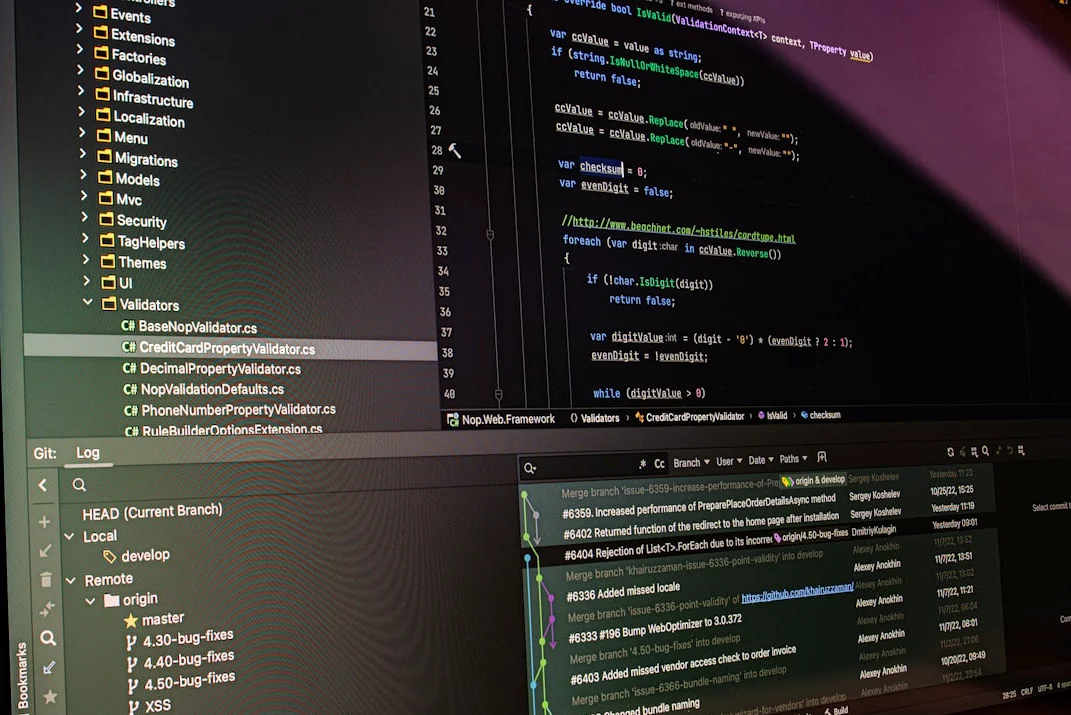

Historically, enterprise applications were built using platforms optimized for web applications, databases, and transactional workloads. These environments are well-suited for traditional software but fall short when handling the massive data volumes, high-performance computing, and distributed training environments required by AI. Data scientists and engineers have often relied on a patchwork of separate tools for data preparation, model training, deployment, and monitoring. This fragmented ecosystem becomes increasingly difficult to manage as AI projects grow in scale and complexity.

Organizations frequently encounter data silos, inconsistent tooling, and fragmented pipelines. When teams integrate these capabilities by hand, the resulting ad hoc architecture slows development and adds operational complexity. This has created a growing need for AI-native development platforms that consolidate these components into a single, unified environment.

AI-native platforms are specifically designed to support machine learning workflows rather than adapting traditional software development tools. These environments integrate the core components required to build, train, deploy, and manage AI systems. Key capabilities typically include built-in support for data pipelines, model orchestration, GPU acceleration, and automated deployment. By consolidating these capabilities into a single environment, organizations can accelerate AI development while maintaining operational control.

Strategic Advantages of AI-Native Platforms

The rise of AI-native platforms reflects a broader shift in how enterprises approach infrastructure. Rather than assembling infrastructure piece by piece, companies are adopting platforms that deliver AI capabilities as a cohesive system. This approach brings three main benefits: lower operational complexity, better collaboration, and faster development.

First, it reduces operational complexity. Engineering teams no longer need to manage dozens of separate tools for data pipelines, training infrastructure, model registries, and deployment systems. Second, it improves collaboration. Data scientists, ML engineers, and software developers can work within the same environment, sharing pipelines, models, and datasets. Third, it accelerates development cycles. Automated pipelines and scalable infrastructure allow organizations to move from model experimentation to production deployment much faster.

These advantages are becoming increasingly important as AI moves deeper into core business operations. For example, large language models, retrieval-augmented generation systems, and autonomous AI agents require complex infrastructure stacks. Managing these components separately introduces significant engineering overhead. AI-native platforms provide pre-integrated pipelines that simplify building and deploying these advanced AI systems.

Many platforms now include built-in support for vector databases, knowledge retrieval pipelines, orchestration frameworks, and model optimization. These capabilities allow organizations to build sophisticated AI applications without managing every infrastructure component individually. As AI adoption expands across departments, these advantages help organizations maintain operational efficiency while scaling innovation.
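To make the retrieval idea concrete, here is a minimal sketch of what a vector store and a knowledge retrieval step do under the hood. It is not any particular platform's API: `embed`, `TinyVectorStore`, and `build_prompt` are hypothetical names invented for this illustration, and the bag-of-words "embedding" is a toy stand-in for a real embedding model.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts (an assumption; real systems use a learned model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, doc: str) -> None:
        # Store each document next to its vector so it is embedded only once.
        self._items.append((doc, embed(doc)))

    def search(self, query: str, k: int = 1) -> list[str]:
        # Rank stored documents by similarity to the query and keep the top k.
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]


def build_prompt(question: str, store: TinyVectorStore, k: int = 1) -> str:
    """Retrieval step of a RAG pipeline: prepend the best-matching documents."""
    context = "\n".join(store.search(question, k))
    return f"Context:\n{context}\n\nQuestion: {question}"


store = TinyVectorStore()
store.add("gpu clusters accelerate model training")
store.add("vector databases store embeddings for retrieval")
print(build_prompt("how are embeddings stored for retrieval", store))
```

In a production setting, each of these pieces (embedding model, index, ranking, prompt assembly) is a separate service to run and monitor, which is the overhead a pre-integrated platform aims to absorb.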

Future of AI Infrastructure

The evolution of AI infrastructure is still in its early stages. Over the next several years, AI-native platforms are expected to become even more sophisticated. Future developments will likely include deeper integration between data platforms and AI systems, automated model optimization, improved hardware abstraction layers, and enhanced governance frameworks.

As enterprises continue embedding AI into their operations, platforms built specifically for AI development will play a central role in supporting this transformation. Rather than adapting traditional infrastructure to accommodate machine learning, organizations are increasingly adopting platforms where AI is the foundation of the entire system. For companies looking to scale AI successfully, the move toward AI-native development environments may prove to be one of the most important infrastructure shifts of the decade.

Recent industry developments also show the strategic importance of securing AI infrastructure. For instance, the landmark $27 billion, five-year infrastructure agreement between Meta and Nebius highlights the growing demand for specialized AI hardware and cloud services. Under this partnership, announced on March 16, 2026, Nebius will supply Meta with dedicated data center capacity to power its next-generation AI models, including access to Nvidia’s upcoming Vera Rubin chips.

Meta’s commitment to AI infrastructure highlights the immense computational demands of training increasingly sophisticated open-source models, such as the Llama series. The company is reportedly planning up to $135 billion in AI-related capital expenditures this year alone, a figure that dwarfs the market capitalization of many Fortune 500 companies. By partnering with Nebius, which has expanded its data center footprint significantly, including a new 1.2-gigawatt campus in Missouri, Meta secures a reliable pipeline of high-performance computing without the need to build and manage all new physical data centers itself.

“We are pleased to expand our significant partnership with Meta as part of securing more large, long-term capacity contracts to accelerate the build-out and growth of our core AI cloud business,” said Nebius CEO Arkady Volozh. This partnership not only reinforces Nebius’s position as a premier global ‘neocloud’ provider but also illustrates the growing importance of AI-native development platforms in the broader infrastructure landscape.

As the demand for AI-native platforms continues to rise, the integration of specialized infrastructure, hardware, and cloud services will become increasingly critical for enterprises aiming to stay competitive in the AI-driven future. The shift toward AI-native development environments is not just a technical evolution but a strategic imperative for organizations looking to scale AI effectively and sustainably.
